Ethical Tech is Good for Business

In a world filled with AI, Trust and Safety are more important than ever

People are in a state of equal parts fear and excitement about AI. Companies are operating in a world with heightened economic and political uncertainty.

Against this backdrop, recent headlines make sense: businesses and people are choosing AI platforms based on trust and safety.

The trust gap is real. A recent Harvard Business Review study found that “Only 6% of companies fully trust AI agents to autonomously run their core business processes.” As a result, many companies are restricting AI use, and the message is clear: businesses want AI they can trust.

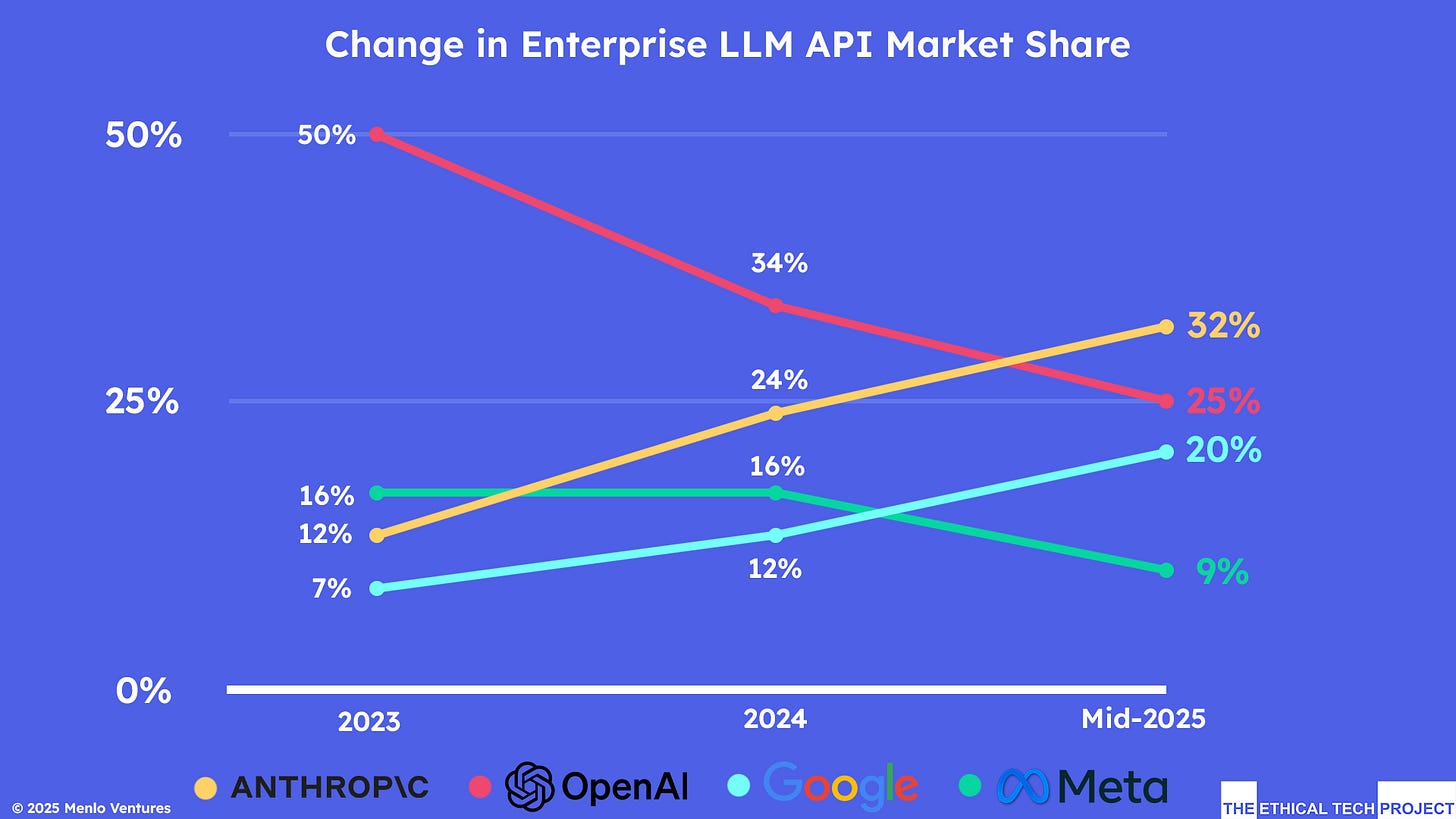

Market data backs this up. According to reports from Menlo Ventures (an investor in Anthropic), Claude’s share of LLM API calls from enterprise customers is growing steadily. In July 2025, Menlo released the graph below showing Claude’s growing enterprise market share.

On December 9, 2025, Menlo Ventures published its 2025 report, The State of Generative AI in the Enterprise, with these key findings:

Anthropic now commands 40% of the enterprise LLM API market (more than triple its 12% share in 2023)

Google climbed to 21% (a 3x increase from 7% in 2023)

OpenAI’s share fell to 27%

What’s driving this shift?

The Harvard Business Review study states: “Security and privacy worries loom largest as barriers to wider adoption.”

The Menlo Ventures report cites Claude’s code performance and control features.

Fortune’s December 2, 2025, article, however, makes its view clear in the headline: “Anthropic’s Safety First Approach Won Over Big Business”

Here are some of the safety features the Fortune article cites:

Constitutional AI: Claude is trained with a written “constitution”—a set of principles that guides its behavior and outputs.

Multi-Layer Security: Safeguards screen dangerous information out of training data, use “constitutional classifiers” to detect jailbreaking attempts, and monitor outputs for constitutional compliance in real time.

Reliability: Claude delivers lower hallucination rates.

Active Threat Intelligence: Dedicated teams probe for vulnerabilities and investigate suspicious usage patterns.

There is plenty of debate about the safety levels of AI models. In an article published by Mashable on December 3, 2025, the headline reads: “AI safety report: Only 3 models make the grade. Gemini, Claude, and ChatGPT are top of the class — but even they are just C students.”

This is according to the AI Safety Index published by the Future of Life Institute, which is led by MIT professor Max Tegmark.

So what is the truth? Are some AI models safer than others? Are they all unsafe? Are some AI companies just better at PR and brand positioning? These are important questions, but regardless of the answers, it is clear that AI safety is an issue every business needs to actively address.

The bottom line: Safety isn’t just an ethical imperative; it’s a competitive advantage. Whether you are a company selling AI systems or a company incorporating AI into your operations and services, you have a greater chance of succeeding if you build with trust, transparency, and security at the core of your AI work.

In an uncertain world, responsible AI isn’t just the right thing to do; it’s the best business strategy.

At the Ethical Tech Project, we are committed to helping business leaders think about issues such as data privacy, transparency, safety, and human control of AI systems. If you care about these critically important issues, let’s collaborate!

Robert Levitan is the new Co-Chair of ETP’s Board of Directors. A longtime digital entrepreneur, he has helped shape multiple phases of the Internet’s development, from early online communities to advanced e-commerce and enterprise solutions. Over the past thirty years, he has founded, scaled, and advised companies such as iVillage, Flooz, Pando Networks, and various venture-backed startups, establishing himself as a thoughtful leader at the crossroads of technology, society, and governance. His recent work focuses on the societal impacts of AI and the importance of robust, transparent frameworks that safeguard individuals and communities as technology progresses.

Here’s what we’ve been reading and listening to, and you should be too:

🤔 Attitudes on AI

The Prof G Pod – Scott Galloway: The AI Dilemma — with Tristan Harris. Tristan Harris, former Google design ethicist and co-founder of the Center for Humane Technology, joins Scott Galloway to explain why children have become the front line of the AI crisis. They unpack the rise of AI companions, the collapse of teen mental health, the coming job shock, and how the U.S. and China are racing toward artificial general intelligence. Harris makes the case for age-gating, liability laws, and a global reset before intelligence becomes the most concentrated form of power in history.

Walter Quattrociocchi: New (BRUTAL) paper out: “Epistemological Fault Lines Between Human and Artificial Intelligence.” When text sounds right, we stop asking whether it’s true. Large Language Models are increasingly used to evaluate, summarize, and even judge information. The common assumption is that, as long as their outputs align with human judgments, they can safely take on epistemic roles.

💸 Money

The New York Times: Wall Street Is Shaking Off Fears of an A.I. Bubble. For Now. The valuations of some artificial intelligence companies are approaching those of the dot-com boom. But investors worry that pulling money from today’s market risks future gains.

The Guardian: Most people aren’t fretting about an AI bubble. What they fear is mass layoffs. Artificial intelligence could make income inequality even worse and create a new underclass. Governments and society must take action.

New York Post: Instacart is charging different prices to different customers - on the same grocery items in the same stores, bombshell study reveals. Groundwork, a consumer advocacy group, said Instacart’s pricing algorithm could lead to shoppers forking over an extra $1,200 on groceries each year.

Center for Humane Technology: Advertising is Coming to AI. It’s Going to Be a Disaster. A 22-year-old has an earnest query for her AI chatbot: “How do I really impress in my first job interview?” To which the AI helpfully responds, “First, you need to think about your clothes and what they communicate about you and your qualifications.” Now, is this sound advice for kicking off a productive career-coaching session — or is it sponsored content?

🧠 Mental Health

The Jed Foundation: When Young People Turn to AI for Emotional Support: JED’s Response to the APA’s New Advisory. Artificial Intelligence holds promise for use in mental health support, but we must apply the same guardrails as in other health interventions.

Robbie Torney: Today Common Sense Media released findings from our comprehensive risk assessment of AI chatbots and teen mental health support. The results are clear: these systems are fundamentally unsafe for the way millions of young people are already using them.

Data Workers’ Inquiry: The Emotional Labor Behind AI Intimacy. Imagine confiding your most private fantasies to what you believe is an unfeeling algorithm that cannot judge or remember. Now imagine that on the other side of that conversation is a man sitting in a one-room home in Nairobi, working through the night while his wife and children sleep. That man is Michael, and this is his story.

📃 Policy and Regulation

The Guardian: World ‘may not have time’ to prepare for AI safety risks, says leading researcher. AI safety expert David Dalrymple said rapid advances could outpace efforts to control powerful systems.

Erie Meyer: WHEW. A bipartisan group of 42 state Attorneys General are standing up against companies pushing dangerous, sycophantic AI, and they’re asking for everything from individual executive accountability to worker protections.

The New York Times: Trump Signs Executive Order to Neuter State A.I. Laws. The order would create one federal regulatory framework for artificial intelligence, Mr. Trump told reporters in the Oval Office.

Defense News: Pentagon taps Google Gemini, launches new site to boost AI use. Hegseth said the U.S. must stay ahead of adversaries who are working to take advantage of rapid technology advancements, like the development of AI.

🍎 Education

Mashable: AI safety experts say most models are failing. Gemini, Claude, and ChatGPT are top of the class — but even they are just C students.

🩺 Medicine

The Guardian: ‘Dangerous and alarming’: Google removes some of its AI summaries after users’ health put at risk. Guardian investigation finds AI Overviews provided inaccurate and false information when queried over blood tests.

🎓 Research and Resources

Anthropic: Claude for Nonprofits. Nonprofits tackle some of society’s most difficult problems, often with limited resources. In partnership with the global generosity movement GivingTuesday, Anthropic is launching Claude for Nonprofits to help organizations across the world maximize their impact.

TechCrunch: A new AI benchmark tests whether chatbots protect human well-being. AI chatbots have been linked to serious mental health harms in heavy users, but there have been few standards for measuring whether they safeguard human well-being or just maximize for engagement. A new benchmark dubbed HumaneBench seeks to fill that gap by evaluating whether chatbots prioritize user well-being and how easily those protections fail under pressure.